How Top Engineers Stop AI Slop: Hooks, Gates & Hard Blocks

Introduction to AI Slop and Its Consequences

Artificial intelligence (AI) has changed how we approach complex problems: it automates tasks and surfaces insights that were previously out of reach. As AI systems become more pervasive, however, AI slop has become a significant problem. Here, AI slop means suboptimal or incorrect output from AI models, particularly machine learning and deep learning models, caused by poor data quality, inadequate training, or biases inherent in the algorithms. The consequences range from minor inconveniences to critical system failures and ethical dilemmas. To contain it, top engineers rely on three complementary techniques: hooks, gates, and hard blocks, each of which adds a checkpoint that keeps unreliable output from reaching users.

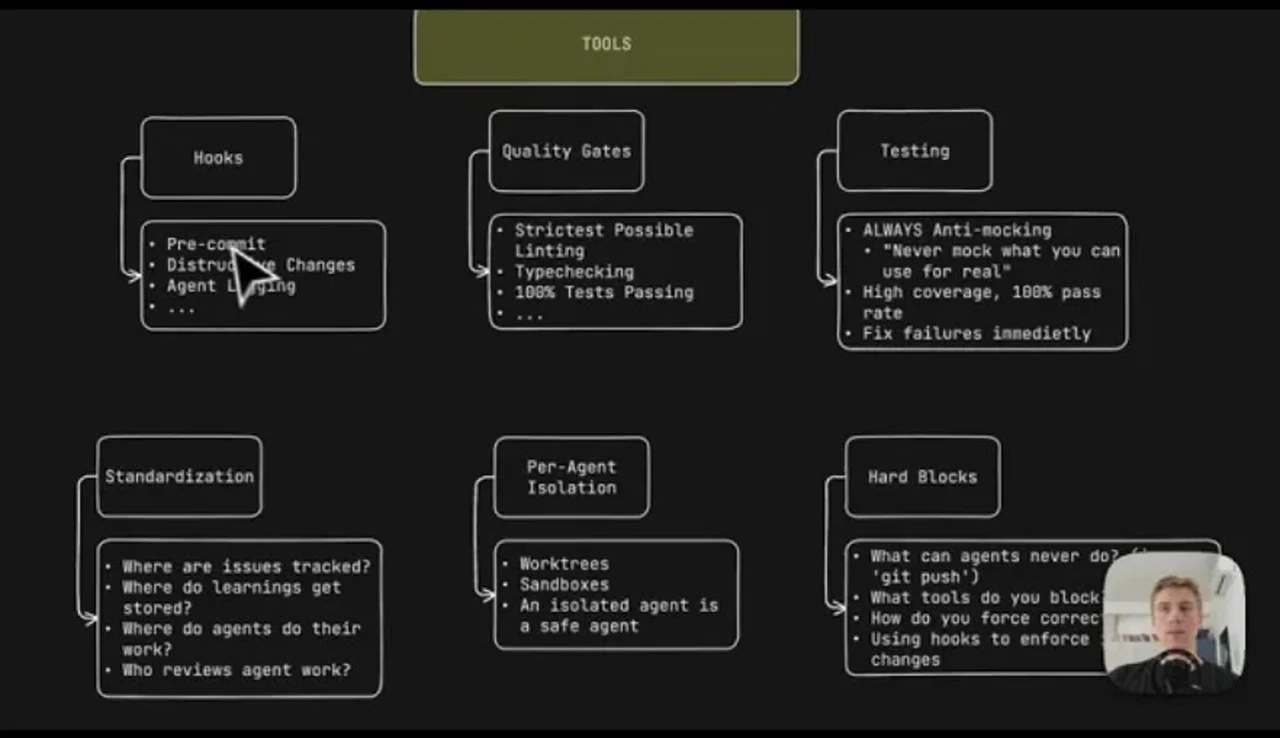

Understanding Hooks in AI Systems

Hooks are a long-established concept in software development: they let developers inject custom code at specific points in a program's execution flow. In an AI system, hooks can intercept and modify a model's behavior, so engineers can detect and correct problems before they grow into full-blown AI slop. Common types include data hooks, model hooks, and inference hooks. Data hooks preprocess and validate input data so that it meets the standards required for training and deployment. Model hooks let engineers inspect, and if necessary modify, a model's internal state to detect biases or anomalies that could lead to AI slop. Inference hooks monitor and control a model's output to stop incorrect or misleading results from reaching users.
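
A minimal sketch of the three hook types follows. Python is used because the article has no code of its own. The `HookedPipeline` class, the toy `LinearModel`, and the three example hooks are hypothetical names chosen for illustration, not a specific library's API; frameworks such as PyTorch provide their own hook mechanisms with different signatures.

```python
from collections import defaultdict
from typing import Any, Callable


class LinearModel:
    """Toy stand-in for a real model (hypothetical, for illustration only)."""

    def __init__(self, weights: list[float], bias: float = 0.0) -> None:
        self.weights = weights
        self.bias = bias

    def __call__(self, features: list[float]) -> float:
        return sum(w * x for w, x in zip(self.weights, features)) + self.bias


class HookedPipeline:
    """Wraps a model and runs registered hooks at three fixed points."""

    STAGES = ("data", "model", "inference")

    def __init__(self, model: LinearModel) -> None:
        self.model = model
        self.hooks: dict[str, list[Callable[..., Any]]] = defaultdict(list)

    def register(self, stage: str, fn: Callable[..., Any]) -> None:
        if stage not in self.STAGES:
            raise ValueError(f"unknown hook stage: {stage!r}")
        self.hooks[stage].append(fn)

    def predict(self, features: list[float]) -> float:
        # Data hooks: each one receives the input and returns a cleaned copy.
        for fn in self.hooks["data"]:
            features = fn(features)
        # Model hooks: inspect the model's internal state before it runs.
        for fn in self.hooks["model"]:
            fn(self.model)
        output = self.model(features)
        # Inference hooks: post-process the output before it is returned.
        for fn in self.hooks["inference"]:
            output = fn(output)
        return output


def replace_nan_with_zero(features: list[float]) -> list[float]:
    # NaN is the only float that is not equal to itself.
    return [0.0 if x != x else x for x in features]


def warn_on_large_weights(model: LinearModel) -> None:
    if any(abs(w) > 10 for w in model.weights):
        print("warning: unusually large weight")


def clamp_to_unit_interval(y: float) -> float:
    return min(1.0, max(0.0, y))


pipeline = HookedPipeline(LinearModel([0.5, 0.25]))
pipeline.register("data", replace_nan_with_zero)
pipeline.register("model", warn_on_large_weights)
pipeline.register("inference", clamp_to_unit_interval)
print(pipeline.predict([1.0, float("nan")]))  # prints 0.5
```

Hooks in each stage run in registration order, so a data hook that cleans the input can be followed by another that validates it.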

A key benefit of hooks is that they are flexible and non-intrusive. Because the custom code runs at chosen points in the AI pipeline, engineers can identify and fix issues without changing the underlying algorithms or models. This makes AI systems more robust against the messiness of real-world inputs. Hooks can also support explainability and transparency: by recording what goes into and comes out of a model at each step, they give engineers visibility into its decision-making and make biases or errors easier to spot.
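
To illustrate the non-intrusive point, the sketch below assumes a plain Python function stands in for the model and wraps it with a decorator that records every input and output for later review. `trace_calls` and `score` are hypothetical names; the point is that the body of `score` is never edited.

```python
import functools
from typing import Any, Callable


def trace_calls(log: list[dict[str, Any]]) -> Callable[[Callable], Callable]:
    """Decorator factory: append each call's inputs and output to `log`."""

    def decorator(func: Callable) -> Callable:
        @functools.wraps(func)  # keep the wrapped function's name and docstring
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            result = func(*args, **kwargs)
            log.append({"args": args, "kwargs": kwargs, "result": result})
            return result

        return wrapper

    return decorator


def score(text: str) -> float:
    """Hypothetical existing model function; its body is left untouched."""
    return min(1.0, len(text) / 100)


audit_log: list[dict[str, Any]] = []
traced_score = trace_calls(audit_log)(score)

traced_score("hello world")
print(audit_log)  # [{'args': ('hello world',), 'kwargs': {}, 'result': 0.11}]
```

Because the wrapper only observes, removing it restores the original behavior exactly, which is what makes hooks safe to add and remove while debugging.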

Gates and Their Role in Preventing AI Slop

Gates are the second technique. A gate is a conditional check or validation step that keeps an AI model operating within predefined boundaries and constraints: data or results pass only if they satisfy explicit criteria. On the input side, gates stop incorrect or incomplete data from being processed. On the output side, they block or modify results that fail specific criteria or thresholds, such as a minimum confidence score. By placing gates at these points, engineers catch errors, anomalies, and biases before they compromise a model's performance and reliability.
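
A minimal sketch of an input gate and an output gate around a model call is shown below. The required fields, the 0.8 confidence threshold, the fallback string, and `toy_model` are all illustrative assumptions. In practice a failed gate might route the request to a human reviewer or a simpler rule-based path.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Illustrative assumptions, not values from the article.
REQUIRED_FIELDS = ("age", "income")
CONFIDENCE_THRESHOLD = 0.8
FALLBACK = "needs human review"


@dataclass(frozen=True)
class GateResult:
    passed: bool
    reason: str


def input_gate(record: dict[str, Any]) -> GateResult:
    """Reject records with missing required fields before the model runs."""
    missing = [f for f in REQUIRED_FIELDS if record.get(f) is None]
    if missing:
        return GateResult(False, f"missing fields: {missing}")
    return GateResult(True, "ok")


def output_gate(confidence: float) -> GateResult:
    """Reject predictions whose confidence is out of range or too low."""
    if not 0.0 <= confidence <= 1.0:
        return GateResult(False, "confidence out of range")
    if confidence < CONFIDENCE_THRESHOLD:
        return GateResult(False, f"confidence {confidence:.2f} below {CONFIDENCE_THRESHOLD}")
    return GateResult(True, "ok")


def gated_predict(
    record: dict[str, Any],
    model: Callable[[dict[str, Any]], tuple[str, float]],
) -> tuple[str, str]:
    gate = input_gate(record)
    if not gate.passed:
        return FALLBACK, gate.reason
    label, confidence = model(record)
    gate = output_gate(confidence)
    if not gate.passed:
        return FALLBACK, gate.reason
    return label, "ok"


def toy_model(record: dict[str, Any]) -> tuple[str, float]:
    # Hypothetical model: confident about high incomes, unsure otherwise.
    if record["income"] >= 50_000:
        return "approve", 0.9
    return "deny", 0.6


print(gated_predict({"age": 30, "income": 60_000}, toy_model))  # ('approve', 'ok')
print(gated_predict({"age": 30}, toy_model))
print(gated_predict({"age": 30, "income": 10_000}, toy_model))
```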

Gates mirror the three hook types. Data gates reject input data that does not meet the standards required for training and deployment. Model gates inspect a model, for example its internal state or its evaluation results, and stop it from advancing if they detect biases or anomalies that could lead to AI slop. Inference gates block incorrect or misleading results before they are returned. The difference from hooks is one of intent: a hook observes or transforms, while a gate makes a pass/fail decision. Combining the two, with hooks collecting the signals and gates acting on them, lets engineers detect and correct errors, anomalies, and biases in real time.
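
As one example of a model gate, the sketch below decides whether a candidate model may replace a baseline by comparing evaluation reports. The `EvalReport` fields, the tolerances, and the use of the largest accuracy gap between subgroups as a bias signal are assumptions made for illustration; real projects choose their own metrics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalReport:
    accuracy: float
    # Largest accuracy difference between evaluated subgroups; an assumed,
    # deliberately simple bias signal.
    max_group_gap: float


def group_gap(group_accuracy: dict[str, float]) -> float:
    """Spread between the best- and worst-served subgroup."""
    return max(group_accuracy.values()) - min(group_accuracy.values())


def model_gate(
    candidate: EvalReport,
    baseline: EvalReport,
    max_regression: float = 0.01,
    max_gap: float = 0.05,
) -> list[str]:
    """Return reasons to reject the candidate; an empty list means promote."""
    failures = []
    if candidate.accuracy < baseline.accuracy - max_regression:
        failures.append(
            f"accuracy regressed: {candidate.accuracy:.3f} vs {baseline.accuracy:.3f}"
        )
    if candidate.max_group_gap > max_gap:
        failures.append(
            f"subgroup gap {candidate.max_group_gap:.3f} exceeds {max_gap}"
        )
    return failures


baseline = EvalReport(accuracy=0.90, max_group_gap=0.03)
candidate = EvalReport(
    accuracy=0.91,
    max_group_gap=group_gap({"region_a": 0.93, "region_b": 0.86}),
)
# Higher overall accuracy, but rejected because one subgroup is served much worse.
print(model_gate(candidate, baseline))
```

Returning a list of reasons rather than a bare boolean keeps the gate's decision explainable in a CI log.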

Hard Blocks and Their Impact on AI Slop

Hard blocks are strict, non-negotiable constraints that prevent AI models from operating outside predefined boundaries or engaging in undesirable behavior. Where a gate may route a failed check to a fallback, a hard block simply refuses. Hard blocks can cap a model's complexity, for example its parameter count, so it does not become overly complicated or difficult to interpret. They can also limit how much data a model processes, preventing information overload or undue influence from any single data source. By keeping models within well-defined parameters, hard blocks reduce the risk of errors, anomalies, and biases.
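
Below is a sketch of hard blocks for the two limits just described, model complexity and data volume, plus a cap on any single data source's share. All limits and names are hypothetical. Unlike a gate, which might route a failed check elsewhere, a violation here raises an exception and the call does not proceed.

```python
class HardBlockError(RuntimeError):
    """Raised when a hard limit is hit; there is no override or fallback."""


# Hypothetical limits; real values depend on the system and its risk profile.
MAX_PARAMETERS = 10_000_000
MAX_INPUT_TOKENS = 4_096
MAX_SOURCE_SHARE = 0.5  # no single source may exceed half the training data


def enforce_model_size(parameter_count: int) -> None:
    if parameter_count > MAX_PARAMETERS:
        raise HardBlockError(
            f"model has {parameter_count:,} parameters; limit is {MAX_PARAMETERS:,}"
        )


def enforce_input_size(tokens: list[str]) -> None:
    if len(tokens) > MAX_INPUT_TOKENS:
        raise HardBlockError(
            f"input has {len(tokens)} tokens; limit is {MAX_INPUT_TOKENS}"
        )


def enforce_source_mix(source_counts: dict[str, int]) -> None:
    total = sum(source_counts.values())
    for source, count in source_counts.items():
        if total and count / total > MAX_SOURCE_SHARE:
            raise HardBlockError(
                f"source {source!r} supplies {count / total:.0%} of the data"
            )


def run(parameter_count: int, tokens: list[str]) -> str:
    # Checks run before any inference; a violation stops the call outright.
    enforce_model_size(parameter_count)
    enforce_input_size(tokens)
    return "ok"


print(run(1_000_000, ["hello", "world"]))  # ok
try:
    run(1_000_000, ["tok"] * 10_000)
except HardBlockError as err:
    print(f"blocked: {err}")  # blocked: input has 10000 tokens; limit is 4096
```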


The main benefit of hard blocks is predictability: because the constraints are absolute, a model cannot drift into undesirable behavior or produce results outside the allowed range, whatever its internals do. This makes hard blocks the natural place to implement safety mechanisms that stop AI systems from causing harm to humans, animals, or the environment. They also support transparency and explainability: each block is an explicit, documented rule, so when a request is refused, engineers can see exactly which constraint fired and use that record to trace potential biases or errors.
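
The following sketch shows a safety-oriented hard block for a hypothetical mobile robot. Whatever speed the model requests, commands outside a fixed envelope are refused and the rule that fired is logged. The envelope values, `SafetyEnvelope`, and `enforce_envelope` are illustrative assumptions, not taken from any real controller.

```python
import logging
from dataclasses import dataclass

log = logging.getLogger("safety")


@dataclass(frozen=True)
class SafetyEnvelope:
    # Hypothetical limits for a slow indoor robot.
    max_speed_mps: float = 1.5
    min_obstacle_distance_m: float = 0.5


class SafetyViolation(RuntimeError):
    """Raised when a model-proposed command leaves the safety envelope."""


def enforce_envelope(
    speed_mps: float,
    obstacle_distance_m: float,
    envelope: SafetyEnvelope = SafetyEnvelope(),
) -> float:
    """Return the speed unchanged if it is inside the envelope, else raise."""
    if abs(speed_mps) > envelope.max_speed_mps:
        reason = f"speed {speed_mps} m/s exceeds {envelope.max_speed_mps} m/s"
    elif speed_mps != 0 and obstacle_distance_m < envelope.min_obstacle_distance_m:
        reason = f"moving with an obstacle {obstacle_distance_m} m away"
    else:
        return speed_mps
    # Log the exact rule that fired so every refusal can be audited later.
    log.warning("hard block: %s", reason)
    raise SafetyViolation(reason)


print(enforce_envelope(1.0, 3.0))  # 1.0
try:
    enforce_envelope(4.0, 3.0)  # e.g. a bad model output
except SafetyViolation as err:
    print(f"refused: {err}")  # refused: speed 4.0 m/s exceeds 1.5 m/s
```

The envelope is a frozen dataclass, so the limits cannot be changed at runtime by the code that the block is meant to constrain.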

2026 Innovation and the Future of AI

The field of AI is evolving rapidly, with new breakthroughs every year. In 2026, we are likely to see significant advances in explainability, transparency, and reliability, leading to more robust and trustworthy AI systems. One probable trend is wider use of hooks, gates, and hard blocks to prevent AI slop, as engineers use them to build systems that hold up under the complexity and uncertainty of real-world applications.

Another likely trend is the growing importance of edge AI, where models run on devices such as smartphones, smart home devices, or autonomous vehicles. Edge AI calls for efficient, lightweight models that can operate with limited memory, compute, and power. Hooks, gates, and hard blocks can help optimize and validate these models, ensuring they stay within those resource budgets and other predefined constraints. Edge deployment is also likely to push the field toward more transparent and explainable systems, making it easier to understand how models reach decisions and to identify potential biases or errors.
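
Here is a minimal sketch of a deployment gate for an edge target, checking a model's size and its median latency against a device budget. The budgets and the `predict` callable are placeholders; on real hardware the measurement would run on the device itself.

```python
import statistics
import time
from typing import Callable

# Hypothetical budgets for one target device.
MAX_MODEL_BYTES = 5 * 1024 * 1024  # 5 MiB
MAX_MEDIAN_LATENCY_MS = 20.0


def median_latency_ms(predict: Callable[[], object], runs: int = 50) -> float:
    """Time `runs` calls to `predict` and return the median in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        predict()
        samples.append((time.perf_counter() - start) * 1000)
    return statistics.median(samples)


def edge_gate(model_bytes: int, predict: Callable[[], object], runs: int = 50) -> list[str]:
    """Return reasons the model does not fit the device; empty means it fits."""
    failures = []
    if model_bytes > MAX_MODEL_BYTES:
        failures.append(f"model is {model_bytes} bytes; budget is {MAX_MODEL_BYTES}")
    latency = median_latency_ms(predict, runs)
    if latency > MAX_MEDIAN_LATENCY_MS:
        failures.append(
            f"median latency {latency:.1f} ms exceeds {MAX_MEDIAN_LATENCY_MS} ms"
        )
    return failures


# Placeholder "model": a cheap computation standing in for real inference.
print(edge_gate(2 * 1024 * 1024, lambda: sum(range(1_000))))
```

The median is used instead of the mean so that a single slow outlier run (for example, a cold cache) does not fail the gate on its own.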

Conclusion and Future Directions

AI slop is a significant concern in developing and deploying AI systems. Top engineers mitigate it with three complementary techniques: hooks, which inject checks and transformations at chosen points in the pipeline; gates, which let data, models, and outputs through only when they pass explicit criteria; and hard blocks, which enforce limits that cannot be crossed. As the field advances in explainability, transparency, and reliability, these techniques will remain central to building trustworthy AI, particularly in edge AI applications, where efficiency, transparency, and explainability are crucial.

Future work will focus on more sophisticated and resilient AI systems that can cope with the complexity and uncertainty of real-world applications. Engineers will need more advanced techniques for detecting and correcting errors, anomalies, and biases, with hooks, gates, and hard blocks as the enforcement layer. The spread of edge AI will also drive demand for efficient, lightweight models, built with optimization techniques such as pruning, quantization, and knowledge distillation and then validated before deployment. Together, these advances should yield more robust and trustworthy AI systems for a wide range of domains, from healthcare and finance to transportation and education.
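
As a hedged illustration of pairing one of these optimizations with a gate, the sketch below applies symmetric per-tensor int8 quantization to a list of weights and then rejects the result if the reconstruction error exceeds a tolerance. Pure-Python lists stand in for real tensors, and the tolerance value is an assumption.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w is approximated by q * scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest level and clamp to the symmetric range [-127, 127].
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    return [v * scale for v in q]


def quantization_gate(weights: list[float], max_abs_error: float) -> tuple[bool, float]:
    """Accept the quantized weights only if no weight moved more than the tolerance."""
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    error = max((abs(a - b) for a, b in zip(weights, restored)), default=0.0)
    return error <= max_abs_error, error


weights = [0.5, -1.27, 0.003, 1.0]
print(quantization_gate(weights, max_abs_error=0.01))
```

Because each weight is rounded to the nearest level, the per-weight error is at most `scale / 2`, which gives a principled starting point for choosing the tolerance.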

About Menshly Tech

Documenting the intersection of human creativity and autonomous systems. Part of the Menshly Digital Media Group.
